What's real anymore? AI warps truth of Middle East war

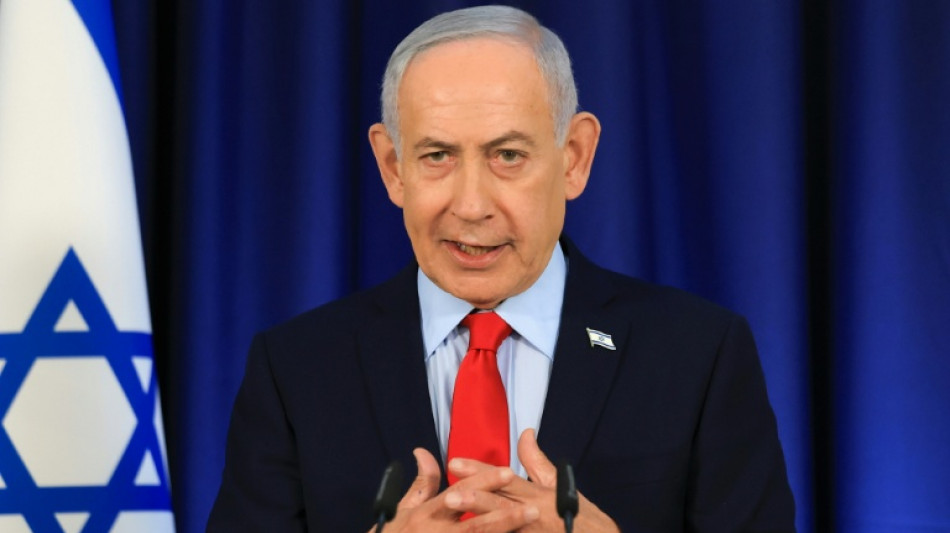

"Is Netanyahu real or AI?" an internet headline blared, pointing to a video that supposedly showed the Israeli prime minister with six fingers.

But the clip was real.

Speculation spiraled online that Netanyahu might be dead or wounded in an Iranian strike and that Israel was covering it up with a double generated by artificial intelligence.

"Last time I checked, humans usually don't have 6 fingers... AI does," said one post on X, garnering nearly five million views. "Is Netanyahu no more?"

Digital forensics researchers were quick to explain the "extra" finger: a trick of light that made part of his palm resemble an additional digit.

But that message was largely drowned out in the online uproar. It also mattered little that advanced AI visual generators -- now capable of churning out uncannily real-looking deepfakes within seconds -- have largely erased the once-telltale glitch of extra fingers.

So how do you prove what's real is real when the line between reality and fabrication has blurred so much in the fog of the Middle East war?

A few days later, Netanyahu posted another video -- a proof-of-life clip from a coffee shop.

He held both hands up as if to challenge skeptics to count his fingers.

But instead of quelling the speculation, the video fueled a new wave of unfounded theories.

"More AI," said one viral Threads post, questioning why his cup remained full after a large sip.

Suspicion reigned even after Netanyahu posted a third video, this one with the US ambassador to Israel, Mike Huckabee.

Some online sleuths zoomed in on Netanyahu's ears, claiming their shape and size did not match older images.

- 'Same footing as hearsay' -

AFP's global network has produced more than 500 debunks of false information in multiple languages since the conflict began -- a rate never before seen in such a crisis. Between a fifth and a quarter of them involved AI-generated content.

The Russian invasion of Ukraine, the Israel-Gaza war and the conflict between India and Pakistan all triggered waves of AI-generated content.

What sets the Middle East war apart is the sheer volume -- and realism -- of AI images produced by advanced tools that are cheap and capable of eliminating many of the old signs of manipulation, researchers say.

Tech platforms are now saturated with what is widely dubbed "AI slop".

The result is a deepening crisis of trust as hyper-realistic AI fabrications compete for attention with -- and often drown out -- authentic images and videos.

"I think at this time we all need to start treating photos, video and audio on the same footing as hearsay," Thomas Nowotny, who leads an AI research group at the University of Sussex in the UK, told AFP.

The issue for Constance de Saint Laurent, a professor at Ireland's Maynooth University, "is not so much that people believe" disinformation; it is "that they see real news and they don't trust it anymore."

- 'Harmful content' -

The volume of fakes has largely outpaced the verification capacity of professional fact-checkers.

The work often feels like a game of whack-a-mole. Debunked claims routinely resurface across platforms awash with fakes, a pattern some researchers call "zombie" misinformation.

Algorithms amplify content based on engagement -- and engagement is often driven by sensationalism, outrage and misinformation.

Social media platforms "act as editors through what they decide to show to their users, primarily through their feed. And very often, that includes harmful content and misinformation," said Saint Laurent.

Financial incentives further accelerate the problem. Some platforms, including X, allow creators to earn revenue based on engagement, encouraging influencers to push misleading or entirely fabricated content for clicks.

According to the London-based Institute for Strategic Dialogue (ISD), a network of X accounts posting AI content about the Middle East war has amassed more than one billion views since the conflict began.

In another viral example, an X account posted an AI video appearing to show Dubai's Burj Khalifa skyscraper collapsing in a cloud of dust.

"10 million views and no Community Note. We cooked ya'll," information warfare analyst Tal Hagin wrote on X 20 hours after it was posted.

By the time a Community Note -- a crowd-sourced verification system, whose effectiveness has been repeatedly questioned by researchers -- was appended to the post a few hours later, the video had more than 12 million views.

Synthetic content has continued to proliferate on X even after the Elon Musk-owned platform announced that it would penalize creators -- suspending them from its revenue-sharing program for 90 days -- if they post AI war videos without a label.

- 'Legofication' -

Meme-driven AI content that trivializes conflict as it spreads misinformation is increasingly crowding out reality on digital platforms, in what ISD researchers call the "Legofication" of war propaganda.

A spoof Iranian AI "Lego Movie" went viral in the first week of the war, accusing US President Donald Trump of attacking Tehran to distract from his role in the Jeffrey Epstein scandal.

Lifelike meme videos have also been used to depict fictional Iranian military victories and even the strategic Strait of Hormuz reimagined as a cartoonish toll booth.

Trump has himself warned that AI has become a "disinformation weapon that Iran uses quite well."

"Buildings and Ships that are shown to be on fire are not — It's FAKE NEWS, generated by AI," he wrote on Truth Social.

Yet the US president has enthusiastically embraced the technology, sharing AI-generated images and videos to portray himself as a king and Superman, while casting opponents as criminals or laughingstocks.

He has also used AI memes to fuel conspiracy theories and false narratives.

Meanwhile, coordinated information operations linked to Russia are exploiting the online chaos, impersonating trusted media outlets such as the BBC to spread falsehoods, according to the ISD.

- Inciting violence -

"We believe tech platforms are not currently doing enough to help users identify whether content is AI-generated or authentic," Meta's Oversight Board, the body created by Facebook to review content moderation decisions, said last month.

"Fake content can be harmful by inciting more violence and fueling further conflict," it added.

AFP works in 26 languages with Facebook's fact-checking program, including in Asia, Latin America and the European Union.

Meta ended its third-party fact-checking program in the US last year, with chief executive Mark Zuckerberg saying it had led to "too much censorship" -- a claim strongly rejected by proponents of the program.

Instead, Zuckerberg said Meta's platforms, Facebook and Instagram, would use the "Community Notes" model -- a move critics argue could further weaken safeguards against misinformation.

Meta's Oversight Board warned that expanding the model outside the United States could pose "significant human rights risks and contribute to tangible harms" to people living under repression or conflict.

- 'Liar's dividend' -

AI detection tools were meant to cut through the fog of the information war. Instead, they are sometimes making it denser.

In the Netanyahu case, conspiracy theorists pointed to an AI detection tool that falsely labeled his coffee shop video as "96.9 percent AI-generated." Other tools reached the opposite conclusion.

The problem extends beyond videos. Social media is rife with fabricated satellite imagery, heatmaps and other pseudo-forensic visuals used to cast doubt on genuine evidence from the war, researchers say.

"The rise of AI deepfakes and the dismissal of real footage are two sides of the same coin," said Sofia Rubinson, of misinformation watchdog NewsGuard.

"When everything could be fake, it becomes easy to believe that anything is."

Social media users have falsely accused leading media organizations such as the New York Times of publishing AI-generated conflict images, including one that showed a large crowd in Tehran celebrating the new Ayatollah Mojtaba Khamenei.

Those who benefit from misinformation can easily exploit this -- a phenomenon researchers call the "liar's dividend," where genuine but unflattering information is waved away as AI-generated.

"Don't let AI technology undermine your willingness to trust anything you see and hear," said Hannah Covington, senior director of education content at the nonprofit News Literacy Project.

"That's what bad actors want: for people to think that everything can be faked, so they can't trust anything," Covington told AFP.

Signs of that shift are already visible, as fake images of real incidents further pollute the information landscape.

After a deadly strike on an elementary school in the city of Minab on February 28, an official Iranian account on X posted a photograph showing a child's backpack smeared with blood and dust.

AFP found the image was very likely AI-generated. But few online seemed troubled that a fabricated image had been used to depict the deaths of real schoolchildren.

"Likely AI edited, but the meaning is real," one Reddit user wrote.
